Idea

Can we tweak an image that holds sentimental value for a user to win their support for a social cause? For example, can we convince a user of the ill effects of climate change by showing how it would affect their favorite hiking trail?

Why is this useful?

Social media puts us in a positive feedback loop of only seeing things we already care about. Can we somehow encourage users to also explore issues they may not care about yet? This work could make users more likely to engage with content about topics they don't care about (yet) by presenting it in a way that appeals to them. This is also good for business: a creator could reach a broader audience by automatically tweaking their content to appeal to a variety of different user bases.

What do we do? Train a GAN to apply an "intent" to an arbitrary image

We train a Generative Adversarial Network (GAN) to disentangle the concept of "intent" (e.g., reverse climate change) from "content" (e.g., imagery of a factory polluting the air). We can then apply the "attribute"/"intent" of climate change to an image with different content, e.g., a specific hiking trail.

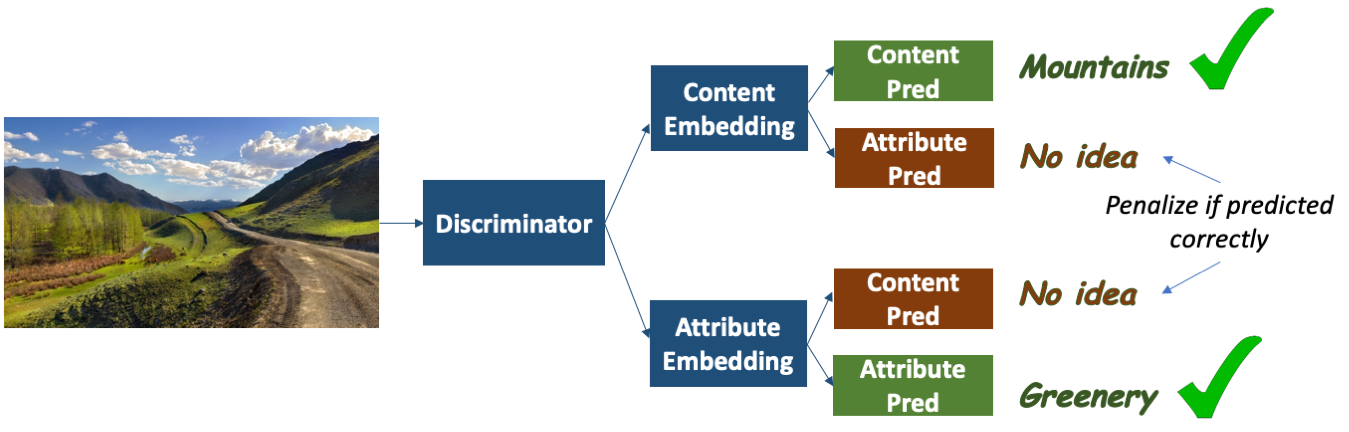

Here is how the discriminator of our GAN works:

We train a discriminator to disentangle the content of an image from the intent/attribute it tries to convey. Given an image, we map it to two embeddings and try to predict both the content (the scene class) and the intent ("climate change" vs. "none") from each of them. For one embedding, we penalize correct predictions of the content but reward correct predictions of the intent; we do the opposite for the other embedding. Ideally, one embedding then captures only the intent and the other only the content. We pretrain this discriminator on the SUN Dataset (various scene classes, all with the "none" intent) and on images with the "climate change" intent scraped from the web. We then use this discriminator to train a generator, in a way similar to CycleGAN, to produce an image with the same content as the original but with the intent/attribute of "climate change".
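To make the penalize/reward scheme above concrete, here is a minimal sketch of the encoder-side disentanglement objective for a single image, in plain Python. This is not our training code. The function names (`cross_entropy`, `disentanglement_loss`), the hypothetical `adv_weight` parameter, and the choice of negated cross-entropy as the "penalty" are illustrative assumptions. In a full model, the logits would come from prediction heads on top of a CNN encoder. The adversarial heads would typically be trained to minimize their own loss while the encoder maximizes it, for example with a gradient reversal layer. The sketch shows only the encoder's side of that game.

```python
import math
from typing import Sequence


def cross_entropy(logits: Sequence[float], target: int) -> float:
    """Softmax cross-entropy for a single example (numerically stable)."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(x - m) for x in logits))
    return log_sum_exp - logits[target]


def disentanglement_loss(
    intent_emb_intent_logits: Sequence[float],
    intent_emb_content_logits: Sequence[float],
    content_emb_content_logits: Sequence[float],
    content_emb_intent_logits: Sequence[float],
    intent_label: int,
    content_label: int,
    adv_weight: float = 0.5,  # hypothetical weight on the adversarial terms
) -> float:
    """Encoder-side objective for one image (illustrative assumption).

    Intent embedding: loss goes down when intent is predictable from it,
    and goes up when content is predictable from it.
    Content embedding: the opposite.
    """
    # Reward predicting intent from the intent embedding; penalize predicting content.
    intent_term = cross_entropy(intent_emb_intent_logits, intent_label) - adv_weight * cross_entropy(
        intent_emb_content_logits, content_label
    )
    # Reward predicting content from the content embedding; penalize predicting intent.
    content_term = cross_entropy(content_emb_content_logits, content_label) - adv_weight * cross_entropy(
        content_emb_intent_logits, intent_label
    )
    return intent_term + content_term


if __name__ == "__main__":
    # Hypothetical labels: intent 0 = "none", 1 = "climate change"; three scene classes.
    loss = disentanglement_loss(
        intent_emb_intent_logits=[0.2, 2.0],  # intent embedding leans toward "climate change"
        intent_emb_content_logits=[0.0, 0.0, 0.0],  # and carries no scene information
        content_emb_content_logits=[3.0, 0.1, 0.1],  # content embedding identifies scene 0
        content_emb_intent_logits=[0.0, 0.0],  # and carries no intent information
        intent_label=1,
        content_label=0,
    )
    print(f"loss = {loss:.4f}")
```

Negated cross-entropy is unbounded, so practical setups often replace it with a term that pushes the adversarial head toward a uniform prediction. Both variants express the same idea of making one factor unpredictable from the other embedding, and which one fits best here is an open assumption.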

Results

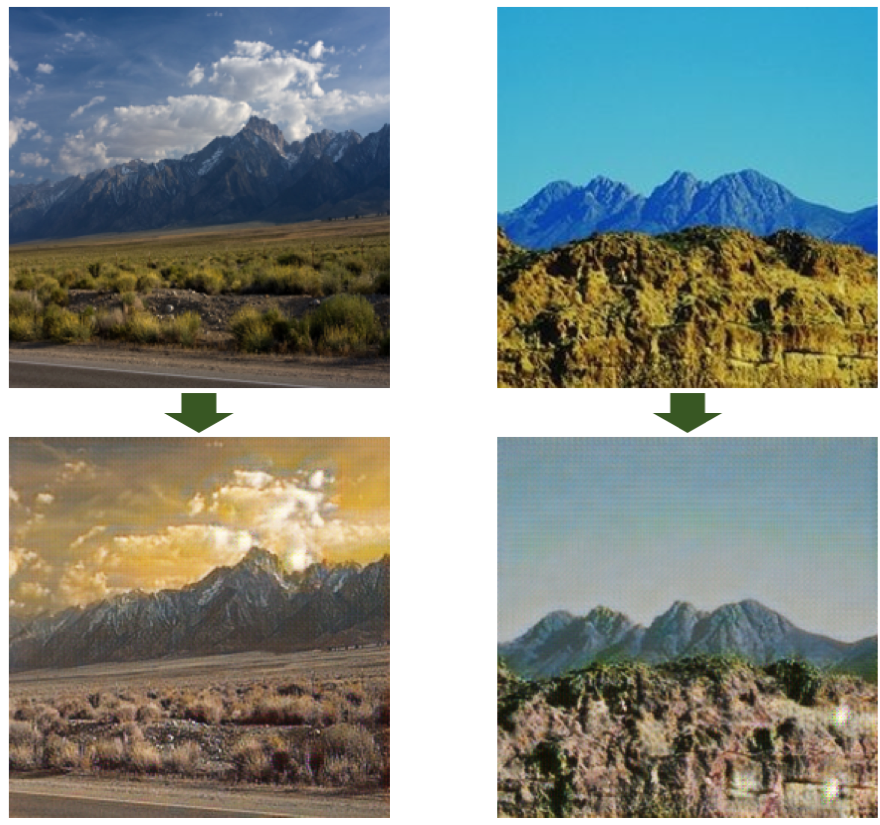

Here are some preliminary results. The top image is the original, and the bottom image is the style-transferred (generated) version that conveys the "effect of climate change" on the original scene.

Note how the leaves and grass look dried out and there is an overall sepia tone to the sky. None of this was explicitly taught or handcrafted! The GAN learned to make these tweaks from climate change imagery on the web in order to convey the intent of climate change.

Patent

Limitations and dangers

We recognize the potential dangers of such a technology, since it can also be used for malicious purposes. However, we hope that by raising awareness of the capabilities of such generative technology and its potential harms, the community can have more conversations and make informed decisions.

Footnote

I am interested in content generation for social causes. If you like this mini-project and have other ideas you want to collaborate on, please don't hesitate to contact me!

Bibtex

@misc{divakaran2021user,
  title={User targeted content generation using multimodal embeddings},
  author={Divakaran, Ajay and Sikka, Karan and Ray, Arijit and Lin, Xiao and Yao, Yi},
  year={2021},
  month=sep # "~23",
  publisher={Google Patents},
  note={US Patent App. 17/191,698}
}